Several years ago, we were upgrading our phone system at school. This work involved a much-needed voicemail upgrade, and I was holding up the process.

The voicemail software was expensive. I don’t remember the exact cost, but I think it was around $30,000. We needed to sign off on the terms of service — basically click “I agree” to all the legal gobbledygook — so the installer could get it configured and deployed for us.

The problem was that I read the terms of service. In addition to signing away all of our legal rights, it contained the standard software disclaimer. The software manufacturer disclaimed all warranties, express or implied, including any warranties of merchantability or fitness for any particular purpose. I refused to sign it.

The installer didn’t understand. “I need the voicemail software that meets our needs,” I remember saying.

“Right. This is it. We just need you to agree to the terms.”

“No. The terms say this software publisher won’t guarantee that it does what they say it does. I want the version that does the voicemail stuff, and that they’re actually willing to stand behind.”

“Yes. This is it. This software works great. The company stands behind it 100%. You’re holding up the project. Just sign the agreement.”

“If this software doesn’t work, my board of education is going to want to know why I spent $30 grand on something that specifically says it doesn’t do what the marketing materials say it does.” I also wanted to talk about the arbitration clause, but I knew I was pushing my luck.

In the end, I dragged my feet for a month. When they’d ask for an update, I’d ask for the version of the terms of service that actually guaranteed the product. Then I wouldn’t hear from them for another week. Eventually, the business manager signed the terms of service, and the project moved forward. So yes, we bought software that specifically says it doesn’t do the thing we bought it to do.

Actually, we do that all the time. This is from Adobe’s license agreement:

> The Services and Software are provided “AS-IS.” To the maximum extent permitted by law, Adobe… disclaim all warranties, express or implied, including the implied warranties of non-infringement, merchantability, and fitness for a particular purpose. The Covered Parties make no commitments about the content within the Services. The Covered Parties further disclaim any warranty that (A) the Services and Software will meet your requirements or will be constantly available, uninterrupted, timely, secure, or error-free; (B) the results obtained from the use of the Services and Software will be effective, accurate, or reliable; (C) the quality of the Services and Software will meet your expectations; or (D) any errors or defects in the Services and Software will be corrected.
>
> Adobe General Terms of Use

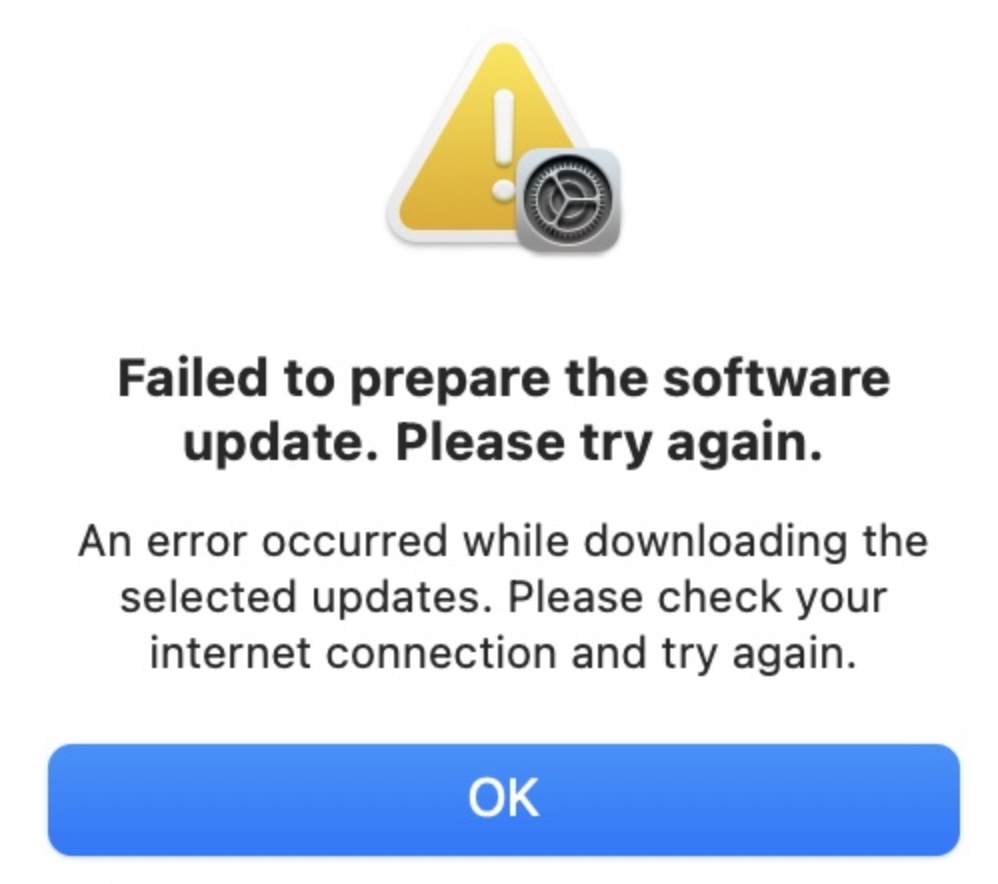

Microsoft’s license says the same thing. So does Apple’s. This is nothing new, and there’s really nothing we can do about it. The only remedy we have is to not use the software. And, by extension, to not use the hardware that requires the software. If I don’t agree to the TOS in the Apple update, I can’t use my Apple device anymore. And even if walking away were a reasonable option, there’s no way I’m getting my money back.

Again, this isn’t new. The technology industry has lived in this bubble of irresponsibility for a generation or more. We give them money. They let us use software. That software is buggy, corrupts data, and introduces security vulnerabilities. They provide updates to fix some of those problems. But the updates introduce new problems. All of the responsibility for maintaining the updates (and, in some cases, paying for them) rests on the customer. It’s an abusive relationship. Maybe if I set up automatic updates, upgrade to the latest phone, and pay for extended support, he won’t come home drunk and violent so often.

That might be going too far.

But this fairyland of software unaccountability is starting to spill over into other areas, with more serious implications. Tesla is heading to court this week to defend itself against five lawsuits over crashes allegedly caused by its self-driving technology. Four of those crashes were fatal. One involved a Tesla driving into a group of police officers who were conducting a traffic stop. The National Highway Traffic Safety Administration (NHTSA) takes safety seriously, and for good reason: the American public has come to expect that cars meet reasonable safety standards. But buried somewhere in the terms of service, there’s probably a clause that says something like, “we don’t guarantee that your car won’t drive itself into a group of cops trying to do their jobs, and it’s your fault if it does.” It’ll be interesting to see how the courts handle this.

And that’s not the only case. Remember the problems with the Boeing 737 MAX? The Maneuvering Characteristics Augmentation System (MCAS), software that Boeing didn’t even tell the pilots about, caused two crashes that killed 346 people. The entire fleet was grounded for nearly two years while Boeing fixed the problem.

Software is embedded in all of our infrastructure. It’s running our mass transit systems, monitoring nuclear power plants, facilitating medical procedures, and managing our defense systems. It’s sitting under our communications tools and our financial systems. If software companies can continue to eschew all responsibility for their code, then we can’t trust their code to do important things.

Last December, scientists fixed a problem with the Voyager 1 probe. It was sending corrupted data back to Earth. They dug the old manuals out of someone’s garage and found the cause: telemetry data was being routed through an onboard computer that had suffered a hardware failure. They sent a couple of commands to re-route that data, and the probe is working correctly again. It’s 15 billion miles away. It hasn’t been touched by human hands since 1977. That’s 45 years without a repair technician. No “unplug it and plug it back in.” It didn’t need a hardware reset or a firmware update. It just works the way it was designed to work. Because it was designed to work.

We should do that with the software we use here on Earth, too.