Artificial Intelligence is here. It’s embedded in the tools we use every day. There’s no practical way to block it in schools, and there’s no reliable method for detecting its use. We know our students are going to use it whether we want them to or not, and we know that there are lots of issues around privacy, safety, ethics, and security that we should be aware of. Still, it has the potential to be one of the rare, truly transformational technologies that affect how we live, work, and interact. So where do we go from here?

Proceed slowly and cautiously

That’s an unusual thing for a tech guy to say. The technology industry thrives on fast-paced innovation. If we don’t jump on this now, we’re going to miss out. But Martin Weller points out that one of the greatest strengths of educational institutions is their resilience to change. We ask a lot of questions. We don’t always assume that “latest” implies “greatest”. That doesn’t mean we don’t innovate. But we want to see some evidence that changing direction is in the best interests of our students, communities, and institutions. This approach is why our schools have been around for hundreds of years. You’re not turning to Ask Jeeves for your web searches anymore. You’re not using MySpace. You don’t have a Compaq computer. Your BlackBerry is a dinosaur. But you’re attending the same schools your grandparents attended. Don’t let the technology industry create a false sense of urgency. In ten years, most of them will be gone. We’ll figure out this AI stuff. But it’ll be in a way that benefits our students and our schools, and it’ll be on our terms.

It’s interesting to note that our leaders really don’t have this figured out yet, either. The Office of Educational Technology at the US Department of Education issued a report called “AI and the Future of Teaching and Learning” back in May. Their primary message is that we should use AI to improve student engagement and to differentiate learning experiences. Those are the same goals for nearly ALL educational technology. Meanwhile, the Consortium for School Networking recognizes that AI is a promising technology, but one that’s not designed for schools or children and whose long-term effects are uncertain. Proceeding cautiously is their primary recommendation. And the Ohio Department of Education seems focused on teaching “AI Literacy,” assuming that whatever skills we teach our students now will still be relevant in 5-10 years when they’re looking for jobs in a field that has reinvented itself multiple times in the past year.

Have conversations about cheating and grades

In one of many AI conversations I’ve attended in recent months, Monash University professor Tim Fawns summed up the next steps pretty succinctly: “What we probably need to do over the long term is all the stuff that we’ve been saying we need to do anyway.” Do our grades reflect what students have learned or what they have done? If we’re using grades as an extrinsic motivator to get our students to do the things we want them to do, we’re in trouble. Many of the things we ask our students to do are things that AI can do very easily, and we can’t tell if they’re using AI to do them. Summative assessments probably need to be done in class on paper. Ideally, formative assessments are an insignificant part of the student’s grade, and there’s no advantage for them to cheat on them.

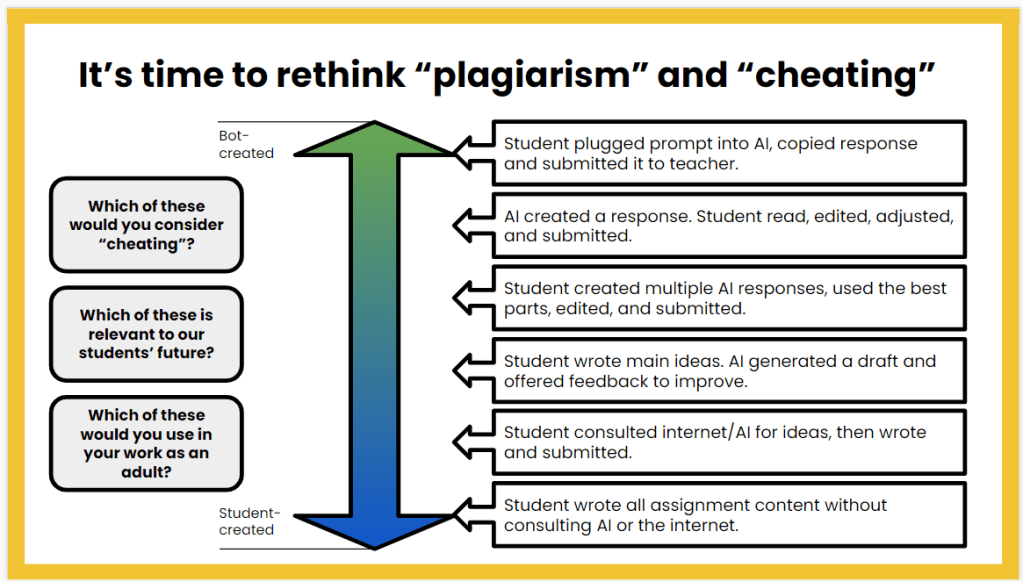

And speaking of cheating, what does that mean, exactly? This slide has stuck with me since I saw it embedded in a presentation last winter:

We need to figure out where the line is now, and we have to reach some consensus about it. It’s not fair for the teacher to determine, after the assignment is submitted, where that line is. We need conversations at the building level to come to a common understanding of what’s okay and what’s not. And that’s going to largely depend on your instructional goals.

Continue to emphasize academic rigor

When I went to school, that was where the knowledge was. The teacher was the expert. And if the teacher didn’t know, it was in the textbook. If it wasn’t in the textbook, it wasn’t worth knowing. That’s not all we did in school. I’m vastly oversimplifying here. But “remembering stuff” played a huge role in K-12 education in the 20th century.

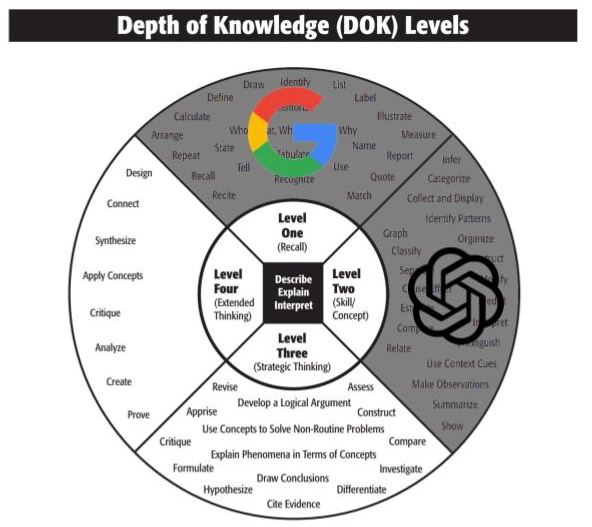

The combination of Google and smartphones changed the game. While it’s still important to know things, remembering stuff is not nearly as critical as it was a generation ago. We’ve actively worked to increase the academic rigor of our classes. Instead of focusing on memorize, identify, list, recite, illustrate, and report, we spend more time on classify, organize, interpret, predict, distinguish, relate, and summarize. This is the second level of Webb’s Depth of Knowledge.

But the AI tools are doing those things for us now, too. Over time, those skills will diminish in importance just as the recall skills have. The next step is strategic thinking. We should increase our emphasis on skills like assess, revise, critique, construct, and hypothesize.

In its purest form, differentiation involves adjusting the academic rigor to the needs of the student. We aim for DOK 2 with most instruction. For students who are struggling, we drop that to DOK 1 for a particular unit or assignment. For students who are excelling, we elevate to DOK 3 and challenge them to do more with their learning. AI gives us the opportunity to move all of it up, and begin by targeting DOK 3 for most instruction. But if you’re still focusing on DOK 1 for a lot of your learning tasks, it’s time to rethink that approach.

Leverage AI to make our jobs easier

This kind of goes back to the cheating conversation earlier. But if I can use AI to take care of some of the more repetitive, routine tasks in my job, why shouldn’t I do that? I don’t really use it for writing, because I like writing, and I’m fussy about my words. But other people would likely see a huge benefit in that area, and I’ve seen it help people overcome the paralyzing effect of a flashing cursor on a blank screen. I have used it to write code, including one case where I asked it to rewrite a PHP function as a Bash script, and it saved me about an hour’s work. I’ve also used it to summarize large blocks of text that I didn’t really want to wade through.

There are lots of tools and articles and news reports and videos about how teachers are leveraging AI to make their work easier. Maybe we need to use AI to help summarize and synthesize all of those resources. I think the key is to pay some attention to what others are doing, to stay open-minded about different approaches, and to be willing to try new things to see what works well for us.

Recognize that it’s all going to change

I could spend a couple of hours every day on AI, just trying to keep up. I’m not an expert on the latest tool or app, or the newest version of whatever engine, and how awesome it is compared to last month’s version, which was the greatest thing ever. Maybe next week there will be a new tool that can detect when students are using AI, and prevent it. But next month, there will be another new app that can work around it. Maybe we’ll find that AI is dangerous and unreliable, and we’ll all stop using it entirely. We’re in a time when all of the assumptions about what the technological world looks like are up for debate. It’s unsettling. It’s exhausting. And it’s exciting.